Experiment troubleshooting

This page covers troubleshooting for Experiments. For setup, see the installation guides.

How do I use an existing feature flag in an experiment?

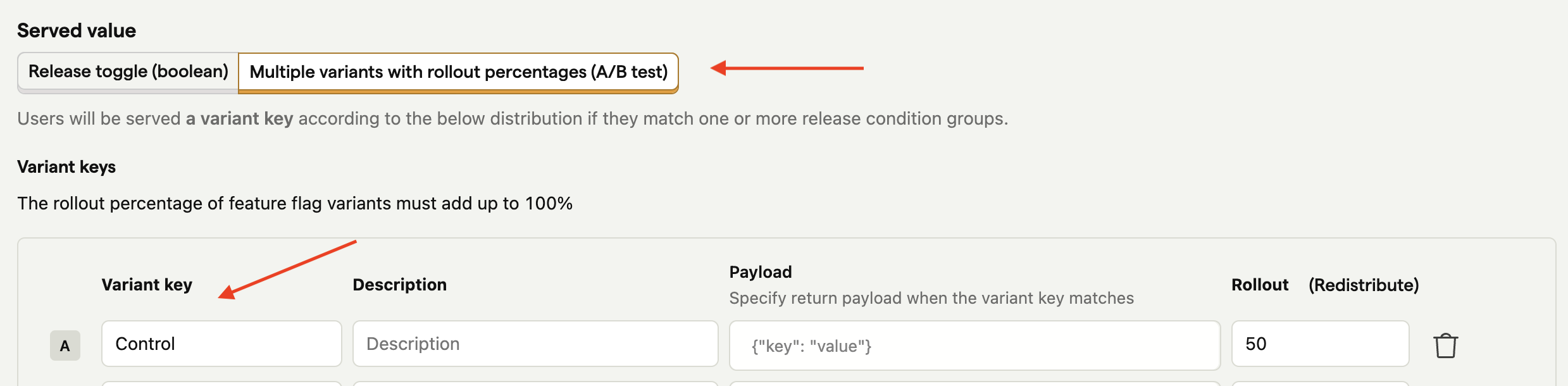

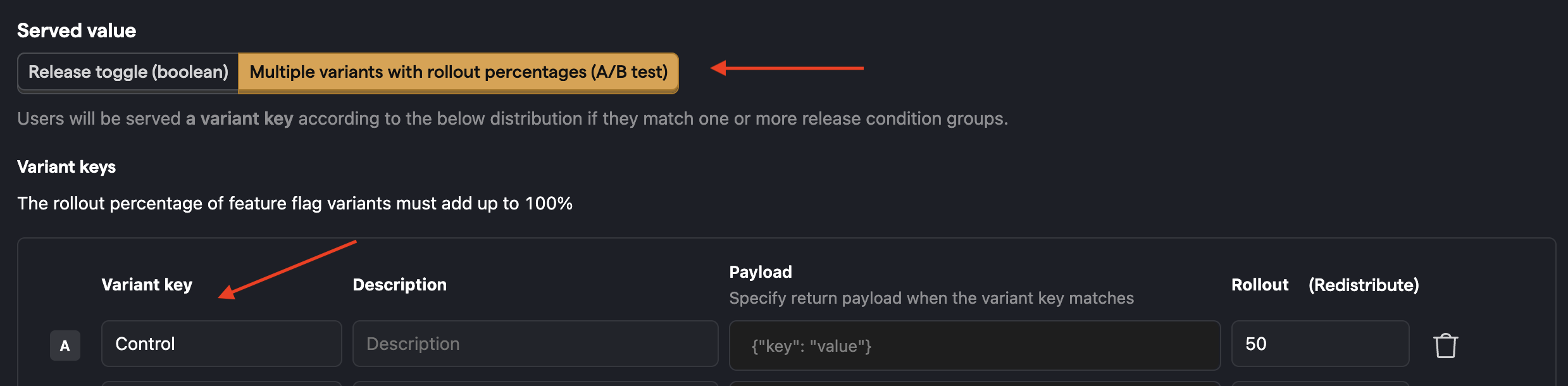

We generally don't recommend this, since experiment feature flags need to be in a specific format (see below) or otherwise they won't work.

However, if you need to do this (for example, because you don't want to make a code change), you can for feature flags with at least 2 variants by following these steps:

1. Delete the existing feature flag you'd like to use in the experiment.

2. Create a new experiment and give its feature flag the same key as the flag you deleted in step 1.

3. Name the first variant in your new feature flag 'control'.

Note: Deleting a flag is equivalent to disabling it, so the flag is off for however long it takes you to create the draft experiment. The flag is enabled again as soon as you create the experiment, even before you launch it.

How do I run a second experiment using the same feature flag as the first experiment?

End the first experiment, then create a new experiment and select the existing flag. You can also duplicate the experiment, which preserves your metric setup and lets you choose between reusing the same flag, selecting a different existing flag, or creating a new one.

Why am I getting a feature flag validation error when creating an experiment?

Experiments require feature flags to meet specific requirements. You may see validation errors if your feature flag doesn't meet them:

- "Feature flag must have at least 2 variants (control and at least one test variant)": Experiments need at least two variants: a control and at least one test variant. Single-variant flags cannot be used for experiments.

- "Feature flag must have a variant with key 'control'": One of your variants must have the key control. This is used as the baseline for comparison.

How can I run experiments with my custom feature flag setup?

See our docs on how to run an experiment without using feature flags.

How do I assign a specific person to the control/test variant in an experiment?

Once you create the experiment, go to the feature flag and scroll down to "Release Conditions". Each condition has an "Optional Override", which lets you force everyone matching that release condition into the variant you choose.

My feature flag called events don't show my variant names

Feature Flag Called events showing None, (empty string), or false instead of your variant names has three potential causes:

1. The flag matched no release conditions (value: false). Your feature flag did not match any of the rollout conditions, and all release conditions were evaluatable. The SDK can conclusively determine the flag is off, so the Feature Flag Response property will be false.

2. The flag is disabled or failed to load (value: None or (empty string)). The variant names will show as None or (empty string) if this is the case. This can happen due to a network error, adblocking, or something unexpected. (empty string) also appears when some of the events for an experiment lack feature flag information.

3. The flag wasn't fully evaluatable locally (value: None). When using local evaluation, if any of the flag's release conditions depend on data that isn't available locally (such as person properties that haven't been provided to the SDK), the SDK cannot conclusively determine whether the flag should be on or off. In this case, the flag is omitted from the event entirely and appears as None. This is different from case 1: a false value means the SDK had enough information to determine the flag didn't match, while None means the SDK didn't have enough information to make a determination at all. For example, if you remove a release condition that used a non-locally-available property, the flag may change from None to false because the remaining conditions are now all locally evaluatable.
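The three cases can be summarized as a small lookup. This is a minimal sketch, not part of any PostHog SDK: diagnoseFlagResponse is a hypothetical helper, and it assumes that None arrives as null or undefined in exported event data.

```typescript
// Hypothetical helper: maps a Feature Flag Response value to the likely
// cause described above. Assumes None is exported as null or undefined.
type FlagResponse = string | boolean | null | undefined;

function diagnoseFlagResponse(value: FlagResponse): string {
  if (value === false) {
    return "matched no release conditions (all conditions were evaluatable)";
  }
  if (value === true) {
    return "flag enabled without a variant key";
  }
  if (value === "") {
    return "flag disabled or failed to load, or the event lacks flag information";
  }
  if (value === null || value === undefined) {
    return "flag disabled or failed to load, or not fully evaluatable locally";
  }
  // Any other string is treated as a variant key.
  return `variant recorded: ${value}`;
}

console.log(diagnoseFlagResponse(false));
console.log(diagnoseFlagResponse(null));
console.log(diagnoseFlagResponse("test"));
```

A None value alone cannot distinguish a load failure from incomplete local evaluation, so the helper reports both possibilities.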

Why are returning users not showing as exposed to my experiment?

The $feature_flag_called event is deduplicated by the SDK to avoid sending redundant data. In the web SDK, this deduplication is per identity by default. Once emitted for a given flag and value, the event is cached across sessions and won't fire again unless identify or reset is called. If a user checked the feature flag before your experiment launched, the event was already cached and won't be re-emitted when the experiment starts. As a result, returning users may not appear as exposed.

Note: Mobile SDKs (iOS, Android, React Native, Flutter) deduplicate per session by default, so this issue does not apply to them.

To fix this in the web SDK, enable advanced_feature_flags_dedup_per_session in your PostHog config:
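A minimal sketch of that setting, assuming a posthog-js setup. The option name is the one given above; the api_host value and the key placeholder are assumptions, and the init call is left as a comment so the snippet runs without the SDK installed.

```typescript
// Sketch of a posthog-js config object. Only
// advanced_feature_flags_dedup_per_session comes from the text above;
// the api_host value is an assumption for illustration.
const posthogConfig = {
  api_host: "https://us.i.posthog.com",
  advanced_feature_flags_dedup_per_session: true,
};

// In an app with posthog-js installed, pass this object to init:
// posthog.init("<your_project_api_key>", posthogConfig);
console.log(posthogConfig);
```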

When enabled, the deduplication cache resets with each new session, so $feature_flag_called is re-emitted for every flag checked in that session. This is especially useful when you launch an experiment on a feature flag that was already being evaluated.
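To illustrate the difference between the two modes, here is a toy model of the deduplication cache. It is an illustrative sketch only, not the SDK's actual implementation; the ExposureDedup class and its method names are hypothetical.

```typescript
// Toy model of exposure deduplication. Not the SDK's real code.
type DedupMode = "per_identity" | "per_session";

class ExposureDedup {
  private mode: DedupMode;
  private seen = new Set<string>();
  private currentSession: string | null = null;

  constructor(mode: DedupMode) {
    this.mode = mode;
  }

  // Returns true if a $feature_flag_called event would be emitted.
  shouldEmit(sessionId: string, flagKey: string, value: string): boolean {
    if (this.mode === "per_session" && sessionId !== this.currentSession) {
      this.seen.clear(); // new session: start with an empty cache
    }
    this.currentSession = sessionId;
    const cacheKey = `${flagKey}:${value}`;
    if (this.seen.has(cacheKey)) return false;
    this.seen.add(cacheKey);
    return true;
  }

  // Models identify() or reset(), after which the event can fire again.
  clear(): void {
    this.seen.clear();
  }
}

// The flag is checked in session-1 (before launch), then in session-2 (after).
const perIdentity = new ExposureDedup("per_identity");
perIdentity.shouldEmit("session-1", "my-flag", "test");
const afterLaunchDefault = perIdentity.shouldEmit("session-2", "my-flag", "test");

const perSession = new ExposureDedup("per_session");
perSession.shouldEmit("session-1", "my-flag", "test");
const afterLaunchPerSession = perSession.shouldEmit("session-2", "my-flag", "test");

console.log({ afterLaunchDefault, afterLaunchPerSession });
```

In this model the per-identity cache suppresses the second event, while the per-session cache emits it again in the new session.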

Why are my A/B test event numbers lower than when I create an insight directly?

Experiment results only count events that include the experiment's feature flag data. Sometimes, when you capture experiment events, the flags are not loaded yet. This means users don't see the experiment, their events won't have the flag data, and they are not included in the results calculation.

By default, insights count all the events, whether they include flag data or not. This is why they show a higher number. To confirm this, break down an insight by your experiment's flag and check the number of events with the value None.

A common case is using pageviews as your goal metric. Because pageviews are captured as soon as PostHog loads, the flag data may not have loaded yet, especially for first-time users whose flags aren't cached. As a result, the pageview count in insights might be higher than in your experiment.

To fix this, make sure flags are available immediately on page load. There are two ways to do this:

- Wait for feature flags to load before showing the page (low engineering effort, but slows page down by ~200ms).

- Use client-side bootstrapping (high engineering effort, but keeps the page blazing fast).
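Both options can be sketched as follows. This is a minimal sketch under stated assumptions: FlagClient is a stand-in for the part of posthog-js used here (its onFeatureFlags callback), renderWhenFlagsReady is a hypothetical helper, and the timeout, flag key, and bootstrap values are placeholders.

```typescript
// Minimal stand-in for the part of posthog-js used below.
interface FlagClient {
  onFeatureFlags(callback: (flags: string[]) => void): void;
}

// Option 1: delay rendering until flags have loaded, with a timeout
// fallback so a failed flag request doesn't block the page forever.
// Hypothetical helper; the 1000 ms default is an assumption.
function renderWhenFlagsReady(
  client: FlagClient,
  render: () => void,
  timeoutMs: number = 1000
): void {
  let rendered = false;
  const renderOnce = (): void => {
    if (rendered) return;
    rendered = true;
    render();
  };
  const timer = setTimeout(renderOnce, timeoutMs);
  client.onFeatureFlags(() => {
    clearTimeout(timer);
    renderOnce();
  });
}

// Option 2: bootstrap flag values computed on the server so they are
// available at init. All values below are placeholders.
const bootstrapConfig = {
  bootstrap: {
    distinctID: "<distinct_id_from_server>",
    featureFlags: { "my-experiment-flag": "test" },
  },
};
// posthog.init("<your_project_api_key>", bootstrapConfig);
```

With option 2, the server has to evaluate the flags for the same distinct ID that the client will use, which is where most of the engineering effort goes.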

Why am I seeing unexpected results in my A/A test?

If you're running an A/A test (where both variants are identical) and seeing significant differences between variants, there are a few things to check:

Feature flag calls: Create a trend insight of unique users for $feature_flag_called events and verify they are equally split between variants. An uneven split suggests issues with flag evaluation.

Implementation verification:

- Use feature flag overrides (like posthog.featureFlags.overrideFeatureFlags({ flags: {'flag-key': 'test'}})) to test each variant

- Check the code runs identically across different states (logged in/out), browsers, and parameters

- Verify that user properties and group assignments are set correctly before flag evaluation

Session replays: Watch session recordings filtered by your feature flag to spot any unexpected differences between variants.

Random variation: While A/A tests should theoretically show no difference, random chance can sometimes cause temporary statistical significance, especially with smaller sample sizes.

Remember: A successful A/A test validates your experimentation setup, while an "unsuccessful" one helps identify issues you can fix to improve your process. If you're still seeing unexplained significant differences, contact support for help troubleshooting.

Why do I need a minimum number of exposures to run an experiment?

Experiments require a minimum of 50 exposures per variant before showing experiment results. This is necessary because, with too few exposures, the results may not be statistically significant and could lead to incorrect conclusions. This threshold ensures that the experiment data is reliable enough to make a decision.
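As a quick illustration of the threshold, the following sketch lists variants that are still below the minimum. The helper name and the example counts are hypothetical; only the value 50 comes from the text above.

```typescript
// Threshold stated above: 50 exposures per variant.
const MIN_EXPOSURES_PER_VARIANT = 50;

// Hypothetical helper: returns the variants still below the minimum.
function variantsBelowMinimum(exposures: Record<string, number>): string[] {
  return Object.keys(exposures).filter(
    (variant) => (exposures[variant] ?? 0) < MIN_EXPOSURES_PER_VARIANT
  );
}

// Example counts are made up for illustration.
console.log(variantsBelowMinimum({ control: 72, test: 41 }));
```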

You can check your current exposure count on the experiment results page. If your experiment is not reaching the minimum number of exposures, you can try the following:

- Verify your feature flag implementation.

- Consider increasing your feature flag's rollout percentage.

- Ensure your exposure events are firing correctly.

Diagnosing sample ratio mismatch (SRM)

When PostHog flags SRM on your experiment, check these causes in order. They're ranked by frequency:

Bot traffic. Bots that trigger server-side flag evaluations are full experiment participants. They hash deterministically into one variant, skewing the split. Fix: enable the CDP Bot Filter Transformation in Settings → Data pipeline → Transformations.

Flag condition changes. Did someone modify the flag's release conditions or rollout percentage after launch? Check the flag's activity log. Even API-level changes count. The UI locks experiment-linked flags, but the API doesn't enforce the same restrictions.

Identity fragmentation. The same real user with two unlinked distinct IDs appears as two participants, potentially in different variants. Check your identify() flow. See identity resolution.

SDK dedup cache overflow. Server-side SDKs (Node, Python) dedup exposure events in-memory with a 50,000 entry cache. If your server handles more than 50k distinct flag+value combinations between restarts, earlier entries are evicted and those users may fire duplicate exposures.

Normal early variance. With fewer than ~1,000 exposures, a 55/45 split on a 50/50 experiment is statistically normal. Hash-based randomization converges with larger samples. If SRM fires early and your sample is small, wait.
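To see why a small sample can look skewed without indicating a problem, you can compute a chi-square goodness-of-fit statistic on the exposure counts yourself. This is a standard statistical check and a sketch only: the source does not describe how PostHog computes SRM, and the p = 0.001 threshold used here is an assumption.

```typescript
// Chi-square goodness-of-fit statistic for observed exposure counts
// against the expected share of each variant (shares must be > 0).
function srmChiSquare(observed: number[], expectedShares: number[]): number {
  const total = observed.reduce((sum, count) => sum + count, 0);
  return observed.reduce((stat, count, i) => {
    const expected = total * (expectedShares[i] ?? 0);
    return stat + (count - expected) ** 2 / expected;
  }, 0);
}

// Critical value for 1 degree of freedom (two variants) at p = 0.001.
// The choice of p = 0.001 is an assumption, not PostHog's documented value.
const CRITICAL_DF1_P001 = 10.828;

// The same 55/45 split at two sample sizes.
const small = srmChiSquare([55, 45], [0.5, 0.5]);
const large = srmChiSquare([5500, 4500], [0.5, 0.5]);

console.log(small, small > CRITICAL_DF1_P001); // 1 false
console.log(large, large > CRITICAL_DF1_P001); // 100 true
```

The same 55/45 ratio is unremarkable at 100 exposures but a clear mismatch at 10,000, which is why waiting for more data is the right response to an early SRM warning.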

Common issues and self-diagnosis

Users appearing in both control and test

This is an identity problem. The user has two distinct IDs that haven't been linked. PostHog sees two separate persons, each correctly assigned a variant.

Check: Are you calling identify() before or after the flag evaluation? Is the same stable ID used in every SDK that evaluates this flag? Did you call reset() somewhere that fragmented the session?
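If you can export exposure events and join them with your own stable user ID, a short script can surface affected users. This is a sketch under assumptions: the ExposureRow shape and its field names are hypothetical, and the join with your user ID happens outside this snippet.

```typescript
// Hypothetical shape of exported exposure events joined with your own
// stable user ID. Field names are assumptions for illustration.
interface ExposureRow {
  userId: string; // your application's stable ID
  distinctId: string; // distinct_id recorded on the event
  variant: string; // variant recorded on the event
}

// Returns users who were exposed to more than one variant.
function usersInMultipleVariants(rows: ExposureRow[]): Map<string, string[]> {
  const variantsByUser = new Map<string, Set<string>>();
  for (const row of rows) {
    const variants = variantsByUser.get(row.userId) ?? new Set<string>();
    variants.add(row.variant);
    variantsByUser.set(row.userId, variants);
  }
  const result = new Map<string, string[]>();
  variantsByUser.forEach((variants, userId) => {
    if (variants.size > 1) {
      result.set(userId, Array.from(variants).sort());
    }
  });
  return result;
}

// Made-up rows: u1 appears under two distinct IDs in different variants.
const sampleRows: ExposureRow[] = [
  { userId: "u1", distinctId: "anon-123", variant: "test" },
  { userId: "u1", distinctId: "u1", variant: "control" },
  { userId: "u2", distinctId: "u2", variant: "control" },
];
console.log(usersInMultipleVariants(sampleRows));
```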

Variant flipped mid-session

Something changed in the evaluation inputs. Common causes:

- identify() called after the flag was already evaluated with an anonymous ID.

- SDK cache cleared (by reset(), page reload without bootstrapping, or server restart).

- Release conditions modified on the flag while the experiment was running.

Check: Pull both $feature_flag_called events for the user and compare the distinct_id, timestamp, and person properties.

Unexpected exposure numbers

The flag is being evaluated in a context you didn't expect. Server-side evaluation without bot filtering means bots are participants. getAllFlags() in your code means exposures aren't being recorded. Multiple SDKs evaluating the same flag with different distinct IDs means duplicate exposures.

Check: Search your codebase for every place the flag key appears. Is every evaluation using getFeatureFlag() or the server-side evaluateFlags API? Is the distinct ID consistent? Is bot traffic filtered? See which SDK methods trigger exposure.
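One way to keep evaluations consistent is to route every check of the experiment flag through a single function that calls getFeatureFlag(). The sketch below assumes a client exposing getFeatureFlag, as posthog-js does; FlagReader, resolveVariant, and the flag key are hypothetical names.

```typescript
// Minimal stand-in for the part of the client used below.
interface FlagReader {
  getFeatureFlag(key: string): string | boolean | undefined;
}

// Placeholder flag key for illustration.
const EXPERIMENT_FLAG_KEY = "my-experiment-flag";

// Single entry point for reading the experiment variant. Falls back to
// "control" when the flag is off, boolean, or not yet loaded.
function resolveVariant(client: FlagReader): string {
  const value = client.getFeatureFlag(EXPERIMENT_FLAG_KEY);
  return typeof value === "string" ? value : "control";
}
```

Note that users who fall back to "control" because the flag hasn't loaded see the control experience without flag data on their events, so they are not counted in the results.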

Metric shows all "none" values with a property breakdown

The property doesn't exist on the event you're measuring.

Check: Go to Data Management → Events → click the event → Properties tab. If your property isn't listed, your instrumentation needs to include it.