How to grow your AEO function (without losing your mind)

I learned SEO the traditional way: by telling a client I knew how to do it, then immediately Googling “what is SEO.” I must have done something right, because not long after that I was hired to do it full-time.

Seven years later, fate (and LinkedIn) led me back to a familiar spot.

"Can you lead our AEO efforts?"

"Of course I can," I replied, with a similar sense of mild panic.

The difference was that this time, everyone else in the industry was still figuring it out too. The concept of AEO (or AIO, AISEO, GEO... [insert your preferred acronym here]) had only existed for a few months. We were all still furiously Googling under the table.

A year later, I'm reporting back from the AI optimization trenches with some good news: it works. We're watching it happen in our numbers, in real time.

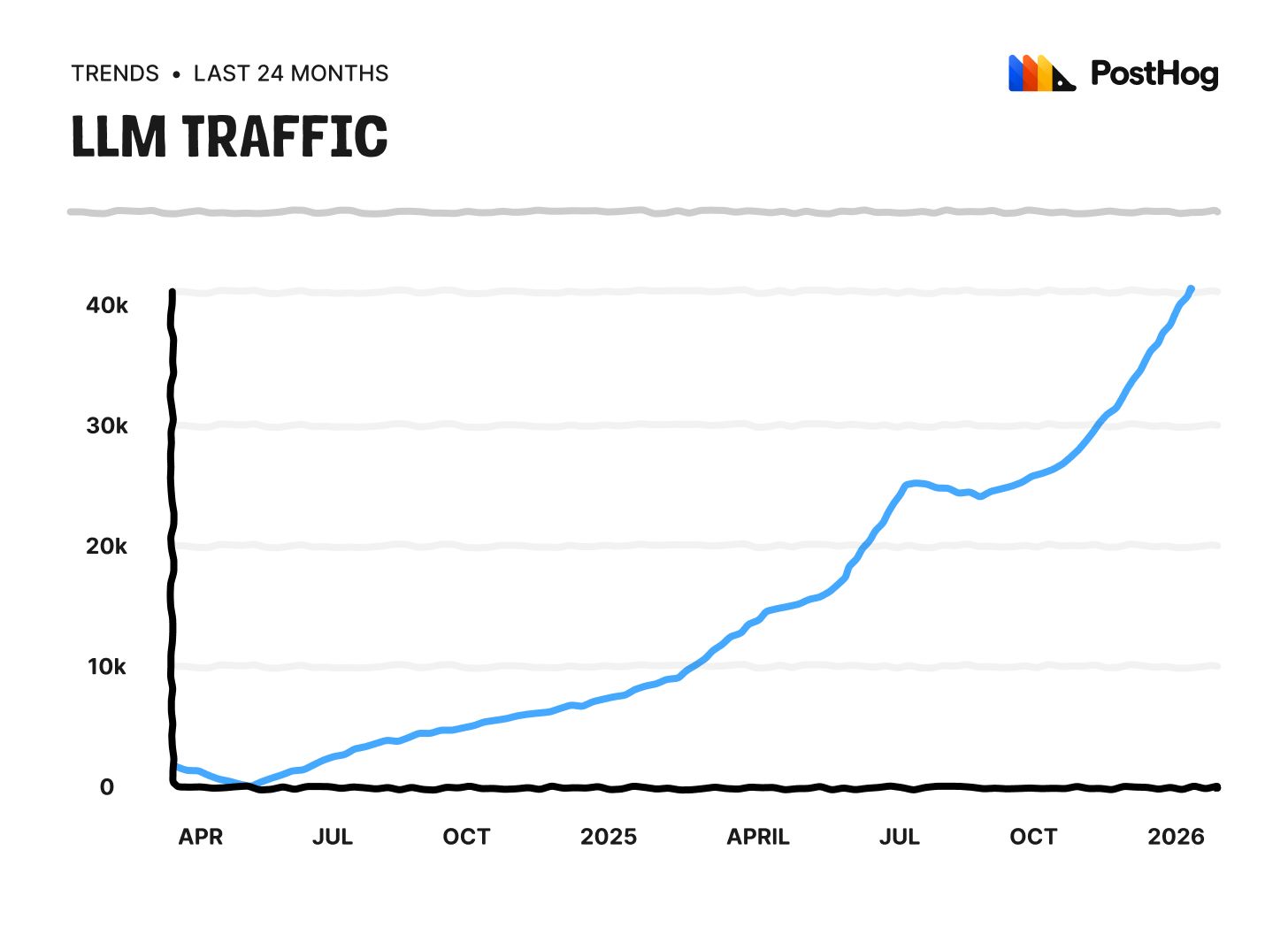

PostHog's LLM-referred traffic has grown 41x in 23 months, 7.4x year-over-year, and every single quarter since Q2 2024 has set a new record, with no signs of slowing down.

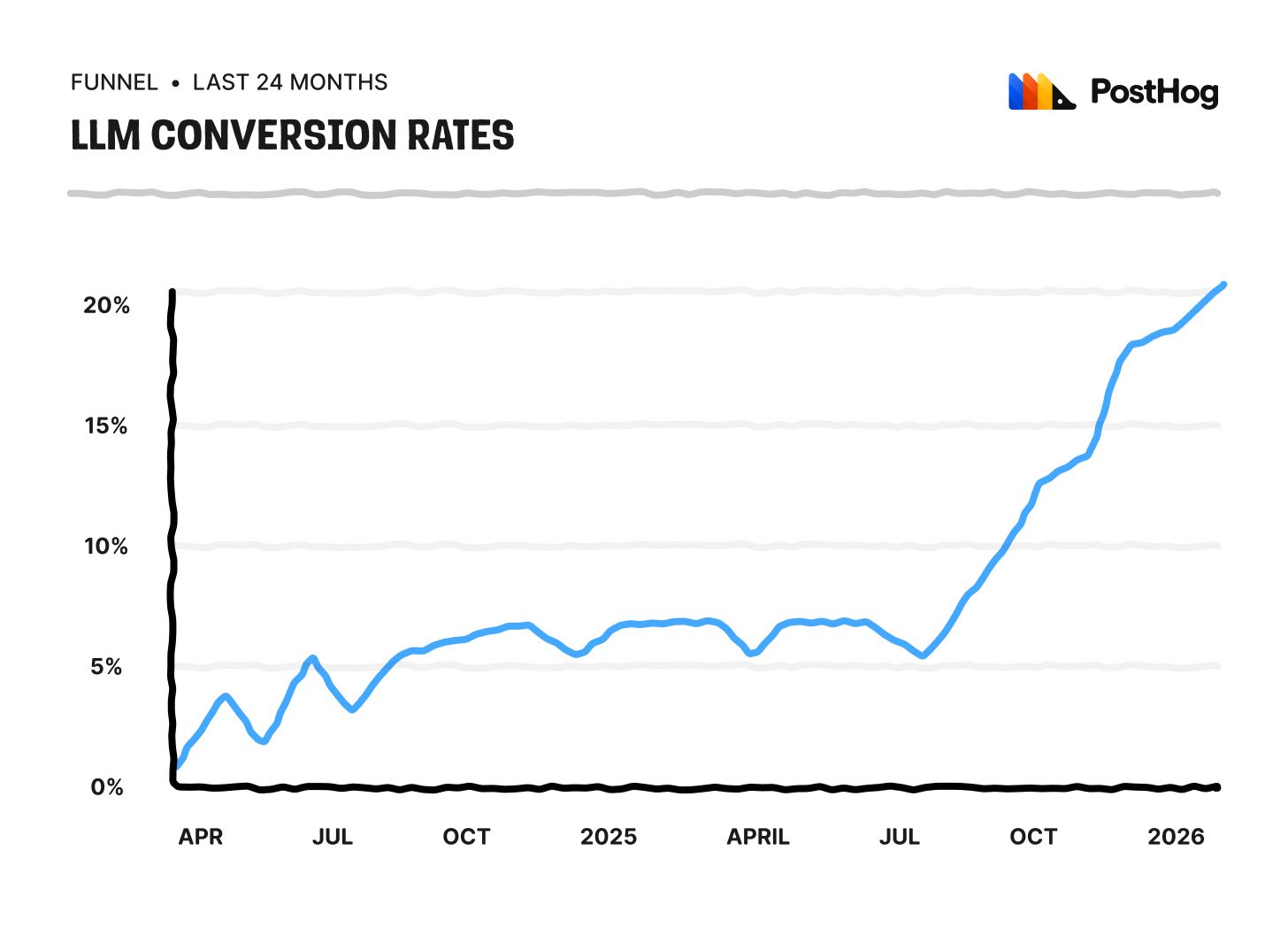

If you're a skeptic like me, you're probably about to ask: "WeLL, BuT dOeS iT cOnvErT?"

Yes, yes it does. Better than almost anything else we have.

I'm not telling you this to humble brag (well, maybe a little). I'm telling you because the inverse is also true.

PostHog has 11 products and counting, and (sadly) not all of them are being recommended by LLMs at the same scale or rate. While most models know and recommend us for product analytics, session replay, error tracking and feature flags, fewer acknowledge we also offer a data warehouse, LLM observability, logs, and a lot more.

This visibility gap has turned into an obvious handicap for our growth in certain verticals, and closing it is something we're actively working on.

You should be, too. Because if you're not in the conversation, you're not in the game.

For most of the last decade, growth has, among other things, been a function of how many strangers Google could send your way. Now an increasing share of it also depends on how often, and how accurately, an LLM mentions your name when asked for a recommendation.

So, how do you get into the conversation? Start with the boring stuff.

1. The basics are still the basics

Here's the least sexy but most obvious piece of advice: most of "traditional SEO" still works.

Good structure, clear writing, semantic HTML, internal linking, fast loading pages, canonical tags, answering the damn question instead of burying it under 400 words of "in today's fast-paced digital landscape…". All of it still matters.

The takeaway here isn't "SEO is dead, long live AEO." It's that SEO and AEO are drinking from the same well; the difference is how they drink from it.

Now, the unit of consumption is a chunk, not a page. Your content needs to be citable in pieces, not just useful as a whole.

Which means the "AEO checklist" most of the industry has already agreed on (the things I'm going to treat as settled for the purposes of this post) looks something like:

- Make your content scannable. Subheadings, short paragraphs, lists where lists belong.

- Use natural language. Write like a person explaining something to another person.

- Cut the fluff. If a sentence doesn't add information, delete it.

- Answer questions directly. If someone asks "what is X," tell them in the first sentence. Supporting context comes after.

- Structure for retrieval. H2s that are actual questions, definitions that can stand alone, summary tables, clear data, FAQs.

- Be specific. Models favor content with concrete details (real numbers, named tools, specific scenarios) over content that gestures vaguely at the same idea. "Most teams hate GA4" is forgettable; "73% of teams using GA4 fantasize about leaving" is citable. (I made that up. Google legal team, don't @ me... it's probably true anyway.)

For a deeper look at how we approach all of this in practice, check out our AEO/SEO writing guide.

I'm not going to pretend I'm reinventing the wheel over here; everything I just listed would also be considered good SEO advice in 2019. The stakes are just higher now, and we have the benefit of hindsight to back it up.

Anyone who's watched SEO mature over the last few years has already seen how high the upside can be when you take it seriously, and AEO is the next frontier of that.

Don't sit it out.

2. Pick your battles

If "the basics" above sound underwhelming, you're not alone.

Most people read a list like that and immediately wonder what else there is to do – and lucky for them, AEO has no shortage of side quests to embark on.

There's this underlying pressure to show up in every channel, every surface, every platform that might influence how LLMs talk about you – and it's true, they all might. That's the catch.

In a world where you don't really know what's feeding the models, everything is a potential input, which means everything becomes a candidate for your time.

Here's the rule I've settled on: clean your house first. Making sure your owned content is genuinely great is step one. Everything else comes after.

That doesn't mean ignoring the extra stuff forever; it means doing it when you have the capacity to do it well, not when you're panicking that your competitor has a Reddit thread and you don't.

Full-ass one thing instead of half-assing twenty.

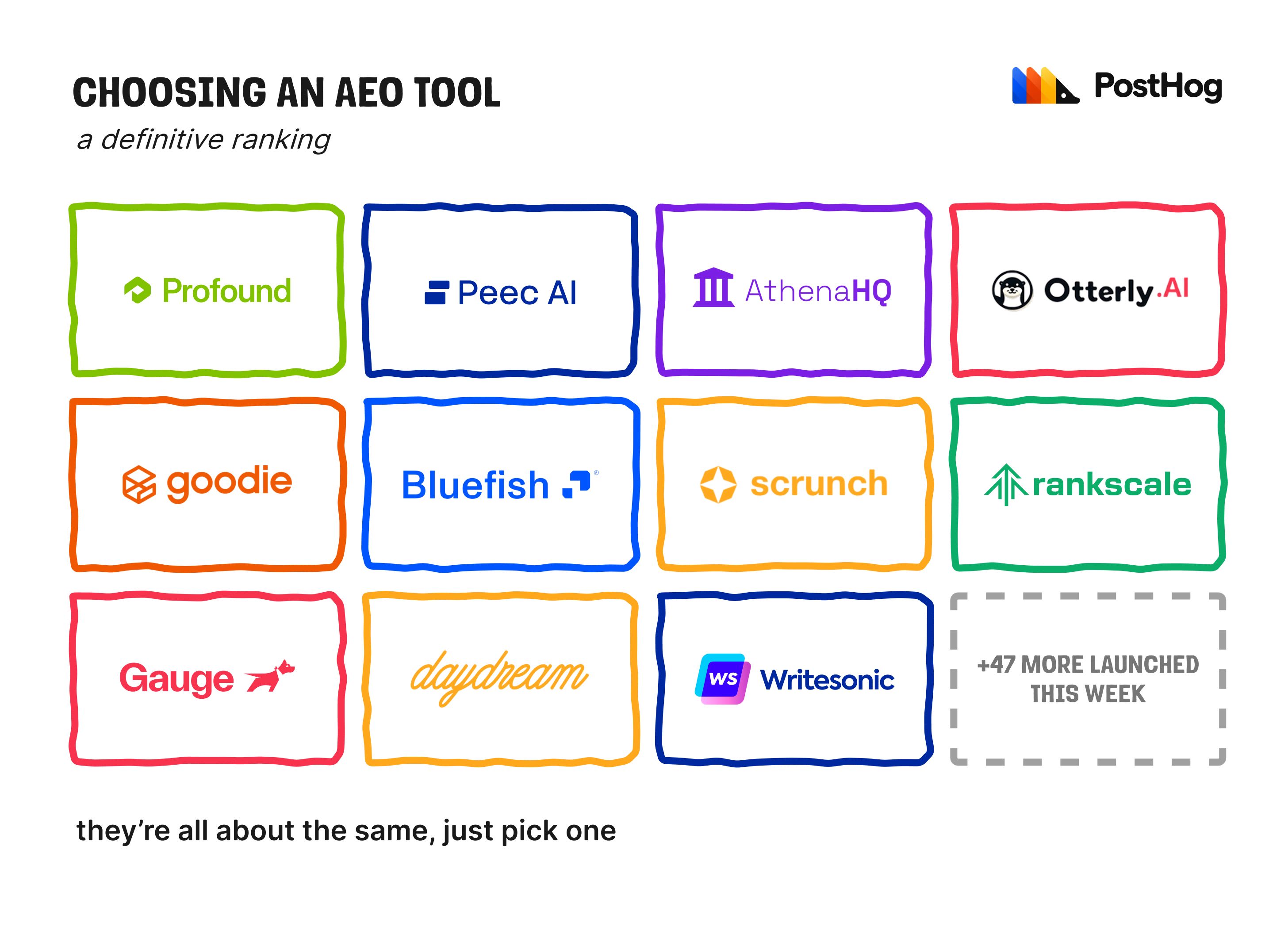

3. Don't overthink the tools

An important step of "doing AEO" is choosing your tools, and boy, that market got hot fast. If I never see another 23-slide deck demo, it'll be too soon.

My suggestion: buy for the problem you currently have, not the one you imagine having.

If you're just starting out, pick something simple. You don't need a Ferrari to learn how to drive; go for a tool that lets you learn the shape of the problem before you invest in solving it at scale. You don't need every fancy feature under the sun; you need the ones you'll actually use.

Want your agent pulling data? Look for a good MCP. Live in spreadsheets? Make sure it exports cleanly.

Honestly though, most tools out there are pretty similar. There's no clear winner, no obviously bad choice, and anyone telling you otherwise is selling something.

Choose one that fits your budget comfortably and matches your usual workflow, and don't overthink it too much.

I went with Gauge for one reason that mattered to me more than anything else: their team builds with us, not at us.

When you're early in your journey and still figuring out what you need like I was, a team that actually ships your feature requests (instead of lobbing them into a ticket backlog where dreams go to die) beats a tool with fifty shiny functions you didn't ask for (or don't need yet). I wanted to be treated as a partner, not just an account number on a spreadsheet, and found that with them.

This is not sponsored. Although, Gauge, if you're reading this and feel like hooking me up with extra prompts, I won't say no.

4. Call the bullshit, especially your own

There has never been an easier time to be a charlatan in this industry. It is trivially easy to say "I increased prompt visibility 50x" when you're the one who chose the prompts – and god knows if anyone actually asked them.

"Who is the best Brazilian-Canadian content marketer with copper hair and a birthmark on her left hip?" OH MY GOD IT'S ME!

I optimized the result to be me. Groundbreaking AEO work.

See what I did there?

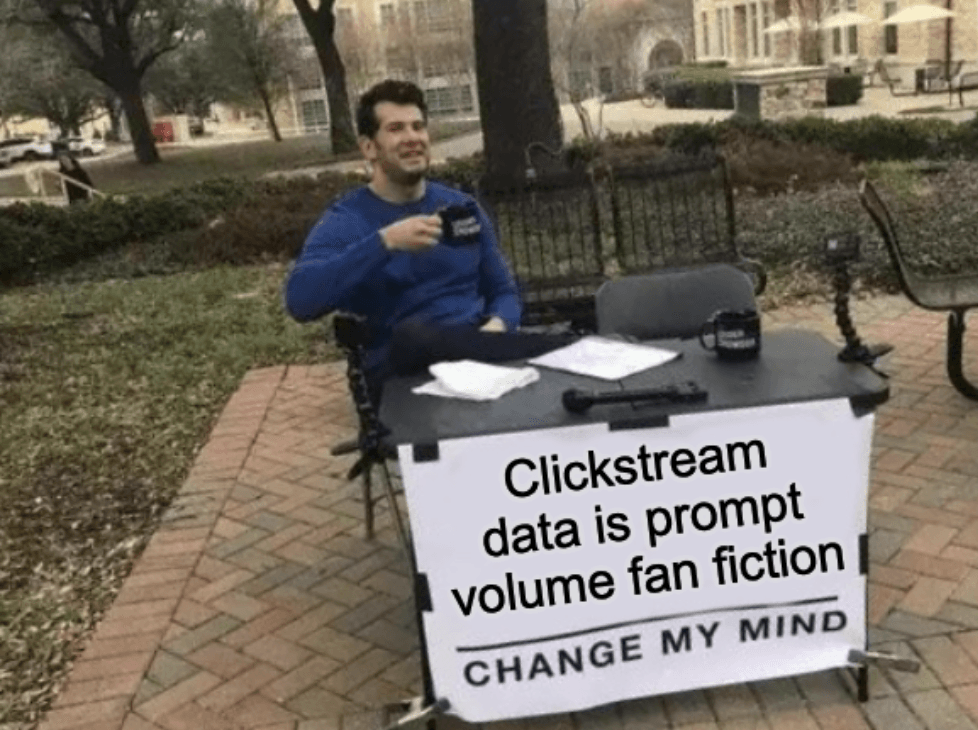

In a world with no real prompt volume data (and no, please don't come at me with tales of clickstream[1]), there are no definitive results either.

The whole discipline is mostly propped up on assumptions and operating on vibes. Which is fine – we're early, that's part of the process – but it means your job as someone actually doing the work is to be the most honest person in every room you're in.

That means questioning everything, especially the stuff that makes you look good. When a number moves, ask why and don't accept the first explanation, even if it's yours.

When a vendor shows you a case study, ask: 50x over what baseline? Measured how? Especially watch for "we tracked it" being passed off as "we caused it" – a lot of "AEO impact" case studies are correlation dressed up as causation.

When your own dashboard tells you something flattering, be twice as suspicious as when it tells you something bad.

And when the honest answer is "I don't know yet," say that.

People respect it, and more importantly, it keeps you from drinking your own Kool-Aid and building a strategy on top of a lie you told yourself.

5. You can just… ask

I'm not great at the whole not knowing thing, so I worked around it. We added a conditional question to our onboarding flow: whenever someone says they heard about us through AI, we ask them which prompt they used.

To my surprise, people answer. In detail. Sometimes long, long paragraphs of detail.

Now we have first-party data on the prompts actually leading people to us. Real sentences typed by real humans with real credit cards.

As of today, 6,814 of them to be exact (and we've only been doing this for 3 months).

Every morning, our PostHog Slack automation drops a report with 50–100+ real prompts submitted by users who signed up on the previous day. PostHog AI helps me identify patterns and pull out useful little nuggets of information, which I feed straight back into our strategy.

It's an amazing feedback loop. The best part is that it cost roughly zero dollars to set up, and it's become one of the most (if not the most) valuable strategic inputs in my entire reporting stack.
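If you want to picture the moving parts, here's a minimal sketch of the conditional question and the daily roundup in Python. It is not our actual PostHog setup: the `"ai"` channel value, the `SignupAnswer` shape, and both function names are assumptions made up for illustration, and the word count is a crude stand-in for the pattern-spotting an AI assistant does.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Hypothetical option value for the "How did you hear about us?" question.
AI_CHANNEL = "ai"


def follow_up_question(channel: str) -> Optional[str]:
    """Return the follow-up only for people who say they came via AI."""
    if channel.strip().lower() == AI_CHANNEL:
        return "Which prompt did you use?"
    return None


@dataclass
class SignupAnswer:
    # Hypothetical record shape for one onboarding response.
    signed_up_on: date
    channel: str
    prompt: str = ""


def daily_digest(answers, day, top_n=5):
    """Collect the prompts submitted on `day` and count their most common words."""
    prompts = [
        a.prompt.strip()
        for a in answers
        if a.signed_up_on == day
        and a.channel.strip().lower() == AI_CHANNEL
        and a.prompt.strip()
    ]
    # Skip very short words so "a", "for", "the" don't dominate the count.
    words = Counter(w for p in prompts for w in p.lower().split() if len(w) > 3)
    return {
        "day": day.isoformat(),
        "count": len(prompts),
        "prompts": prompts,
        "top_words": words.most_common(top_n),
    }
```

Run `daily_digest(answers, yesterday)` on a schedule and post the result wherever your team already looks. The point is that the raw prompts travel with the summary, so you can always read the real sentences behind a pattern.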

It has also been a goldmine of unfiltered user thoughts, which I personally love.

How did you hear about us?

Damn, I don't remember. Probably something like 'Yo, I need to track and log user activity in the platform, what should I do' and Claude was like, PostHog. Dead simple to set-up over a 30-minute dedicated session and they have a generous free tier.

An honorable mention to the user who told us they found out about PostHog in Fortnite voice chat, specifically talking to a Darth Vader NPC about analyzing the Fortnite economy. I think about him often.

Some of the best insights I've gotten came from just asking other people doing this job what they're trying.

A conversation with our friends at Supabase taught me about their approach to bridging support conversations and AEO, something I hadn't even considered as a surface area, and one I've been chewing on ever since.

Your peers are also building the plane while flying it. Learn from their mistakes. Borrow from their wins. And pay it forward when it's your turn.

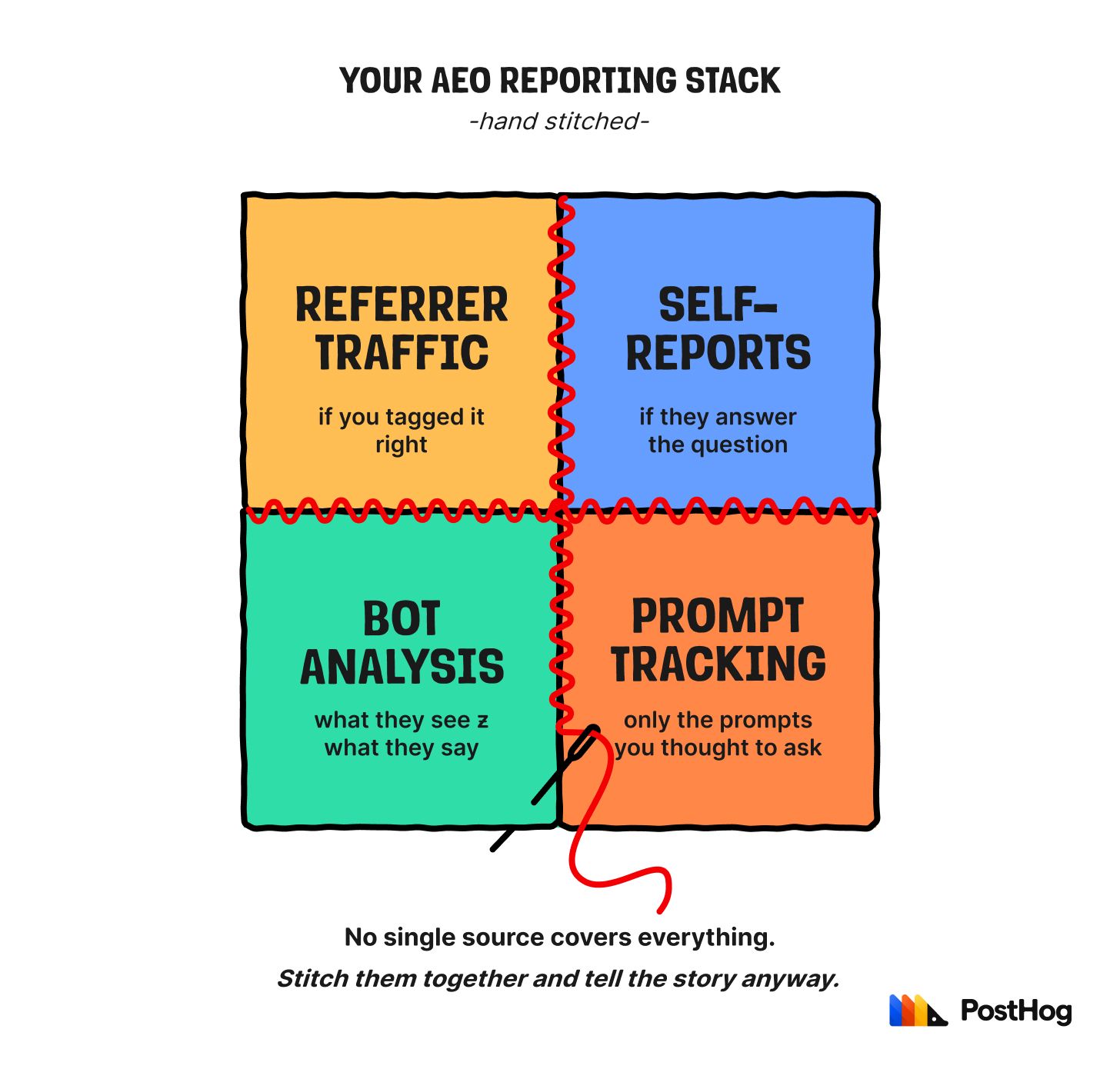

6. AEO reporting is a quilt, not a blanket

No single data source tells the full story. Not yet, anyway.

- Referrer traffic catches some of it, but not everything is properly tagged.

- Self-reported attribution catches more, but not everyone answers the question.

- Bot analytics (who's crawling you, how often, for what) helps you understand what the models are seeing, which is a different question than what the models are saying.

- Prompt visibility tools tell you what the models are saying, but only for the prompts you thought to track.

We can poke holes in every one of these sources.

Is it annoying? Yes, very. The last decade of clean(ish) attribution coddled us – anyone in growth, marketing, or analytics has gotten used to a dashboard where everything reconciled. AEO isn't going to give us that anytime soon.

If you're a founder watching your team try to report on this, give them grace; if you're the one reporting on it, godspeed.

So does that mean you don't report on it? No. Sometimes you tell the story even if all you have is duct tape and a dream.

The job is to connect the dots to the best of your ability and communicate that forward – and to be honest about which dots are real data and which ones are inference.

The good news is that this part has gotten dramatically easier in the last couple of months, thanks to MCPs. If your data sources have them, your agent can fetch from each one and help you see the bigger picture.

Mine pulls from Gauge for prompt visibility (and from GSC for clicks, impressions, and rankings, via Gauge's connector), PostHog for traffic, conversion, and product-level breakdowns, our self-attribution survey and prompt data for the qualitative side, and a few more besides.

It's not magic, and it's not always clear cut, but it's a hell of a lot better than copy-pasting between four tabs and praying the numbers line up.
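For the "which dots are real data and which ones are inference" part, one option is to make every number carry that label with it. The sketch below is an assumption about how you might structure it, not a description of my agent setup: the `Signal` fields, the `build_report` function, and the source names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    # Hypothetical shape for one data point in an AEO report.
    source: str      # e.g. "referrer", "self_reported", "prompt_visibility"
    metric: str
    value: float
    measured: bool   # True = directly observed; False = inferred or extrapolated
    blind_spot: str  # the known hole in this source


def build_report(signals):
    """Split signals into measured vs inferred so the reader always sees which is which."""
    report = {"measured": [], "inferred": []}
    for s in signals:
        key = "measured" if s.measured else "inferred"
        report[key].append(
            f"{s.source}: {s.metric} = {s.value:g} (blind spot: {s.blind_spot})"
        )
    return report
```

Every line in the output names its own blind spot ("not everything is properly tagged", "not everyone answers the question", "only the prompts you thought to track"), which keeps the patches of the quilt visible instead of stitching them into one suspiciously clean number.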

7. Be prepared to start over

A few months in, I realized my prompt strategy was weak. We were tracking hundreds of AI-generated prompts, and a lot of them had little overlap with the prompts real people were actually using to find us. (How did I figure that out? Refer to #4 and #5.)

Which meant six months of historical data aged like milk.

When you're working in a discipline this new, any data feels sacred, so admitting to my team and to myself that ours needed to be scrapped was a tough pill to swallow. It would've been easier if there were a playbook to defer to; there isn't.

But I did it anyway – we started over, and the new approach is already producing more useful data than the old one ever did.
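If you want to run the same sanity check on your own tracked prompts, here's a rough sketch of one way to measure the overlap. It is not the method I used. It swaps in plain word-set (Jaccard) similarity, and the function names and the 0.5 threshold are assumptions you should tune against your own data.

```python
import re


def tokens(prompt: str) -> frozenset:
    """Lowercase word set for a prompt; a deliberately crude normalization."""
    return frozenset(re.findall(r"[a-z0-9]+", prompt.lower()))


def jaccard(a: frozenset, b: frozenset) -> float:
    """Shared words divided by total distinct words (0.0 when both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def coverage(tracked, real, threshold=0.5):
    """Share of tracked prompts that resemble at least one real user prompt."""
    if not tracked:
        return 0.0
    real_sets = [tokens(r) for r in real]
    hits = sum(
        1
        for t in tracked
        if any(jaccard(tokens(t), r) >= threshold for r in real_sets)
    )
    return hits / len(tracked)
```

A low `coverage` value doesn't prove your tracked prompts are wrong, since word overlap misses paraphrases, but it's a cheap early warning that you're measuring prompts nobody actually types.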

Zoom out, though, and the same principle applies to everything else in AEO. The models are changing constantly; a feature that didn't exist last week can completely rewire the landscape you thought you'd figured out. A win today may not be a win tomorrow.

What I'm trying to say here is that adaptability isn't a nice-to-have. In a settled channel, being attached to your v1 is inefficient; in a new one like AEO, it can be fatal.

Anyone who lived through the Panda, Penguin, or Helpful Content updates in SEO (Google algorithm shifts that wiped out entire categories of content overnight) already knows this tune all too well; the strategies that got you here might get you nothing in six months.

The key is to not fear it, but to expect it.

Frequently asked AEO questions

Should I always add an FAQ to my content?

Kidding, that was mean. Yes, you should. FAQs catch the long tail of questions your users actually ask, and they give models more discrete chunks to cite. Two birds, one section.

The caveat: make them genuinely useful. There are still humans reading this stuff, after all. People can tell when you're padding your content for the sake of it, and so can the models.

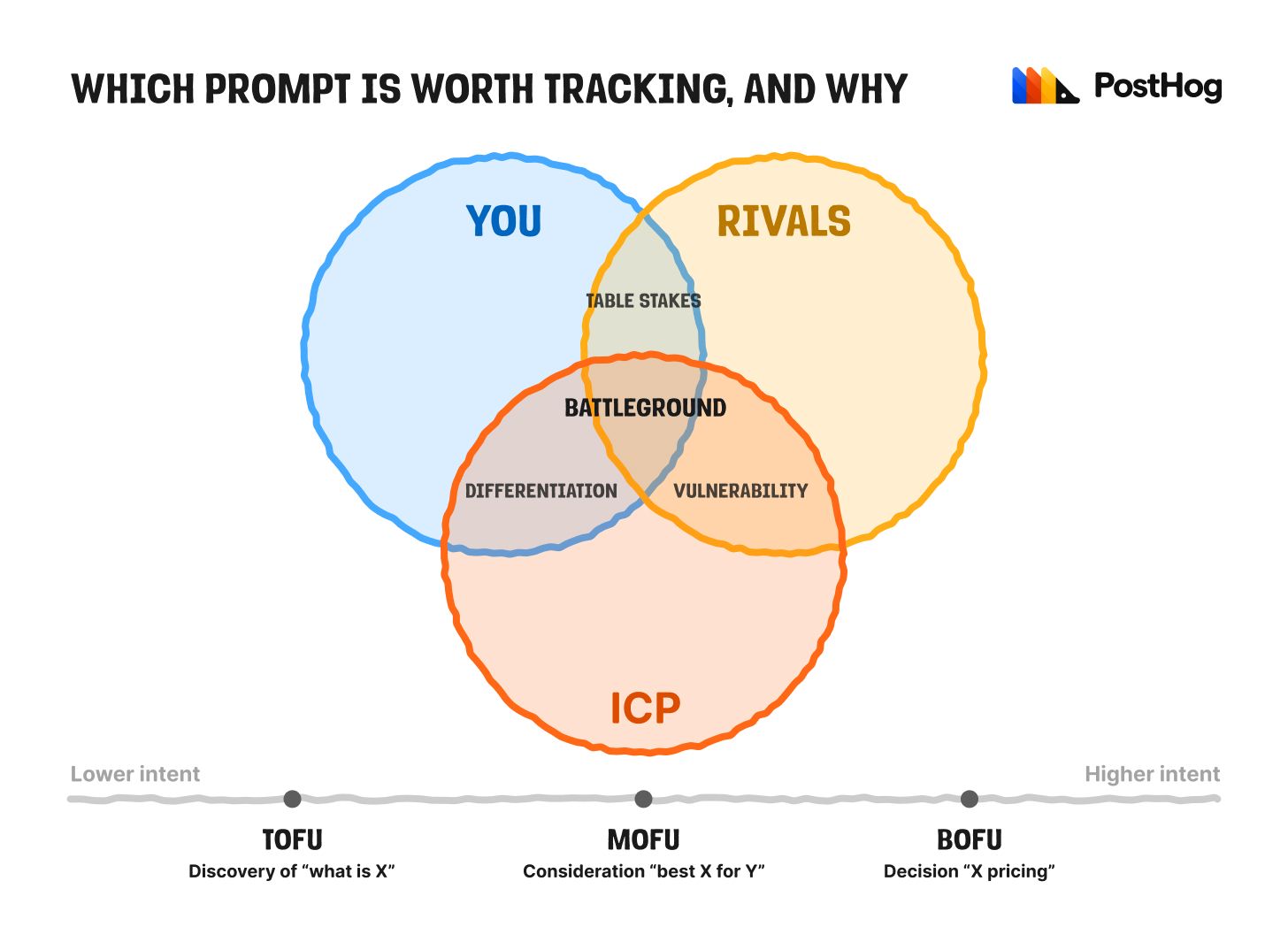

How do you decide which prompts to track?

My honest answer is that when I first started I took 100+ AI-generated suggestions from my AEO tool, tracked all of them, and hoped for the best (see section #7 for how that ended).

Now I have a framework, or at least a more confident shape of one. It comes down to three circles:

- Your ICP (the people you're trying to reach, and what they actually care about)

- You (your product, your features, the things you do well)

- Your competitors (what they offer, how they position, where they're strong)

Every prompt worth tracking lives in one of the overlaps:

- You ∩ ICP: differentiation prompts. What your customer cares about that you do and competitors don't. If we're not in the answer, something's broken.

  - PostHog example: "Open-source web analytics tool that won't sell my data."

- You ∩ Competitors: table stakes prompts. What you and competitors both do. Everyone shows up here. Worth tracking as a baseline, but rarely worth fighting for as they're usually too broad, and nobody wins by being the fifth mention in a list of ten.

  - Example: "Best web analytics tools."

- ICP ∩ Competitors: vulnerability prompts. What your customer wants, competitors offer, and you don't (or don't do well yet). You're losing these. Track them to learn where your ICP is being pulled instead.

  - PostHog example: "Best web analytics tool with native Google Ads integration."

- All three: battleground prompts. Your ICP wants it, you do it, your competitors do it too, which means the LLM has to pick someone from the shortlist when it answers. This is where the actual war happens. Optimize here first, hardest, most obsessively.

  - PostHog example: "Best alternatives to google analytics for startups" or "best web analytics tool for developers"

Layer that with intent (TOFU / MOFU / BOFU, aka where does it sit in the conversion funnel) and you have your priority list.

Battleground prompts at MOFU are where I spend most of my time. Everything else gets monitored, but not fussed over.
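The three circles plus the funnel layer can be written down as a tiny lookup, which is handy if you're tagging prompts in a spreadsheet export. This is a sketch of the framework as described above, not a tool we ship: the function names, the "outside the overlaps" label, and the idea of answering three yes/no questions per prompt are assumptions for illustration.

```python
# Key order: (ICP wants it, you offer it, competitors offer it).
CATEGORIES = {
    (True, True, False): "differentiation",  # You ∩ ICP
    (False, True, True): "table stakes",     # You ∩ Competitors
    (True, False, True): "vulnerability",    # ICP ∩ Competitors
    (True, True, True): "battleground",      # all three
}


def classify(icp_wants: bool, you_offer: bool, competitors_offer: bool) -> str:
    """Map a prompt's three yes/no answers to its overlap category."""
    key = (icp_wants, you_offer, competitors_offer)
    # Anything in only one circle (or none) is not in an overlap.
    return CATEGORIES.get(key, "outside the overlaps")


def is_focus(category: str, funnel_stage: str) -> bool:
    """Battleground prompts at MOFU get the attention; the rest is monitored."""
    return category == "battleground" and funnel_stage.strip().upper() == "MOFU"


# Example using a prompt from the list above; tagging it MOFU is an assumption.
category = classify(icp_wants=True, you_offer=True, competitors_offer=True)
print(category, is_focus(category, "MOFU"))
```

The hard part is answering the three questions honestly for each prompt. The code just keeps you consistent once you have.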

But how do you actually write these prompts?

This is where it gets unsexy: it's a mix. Keywords from traditional SEO research, verbatims from our self-attribution survey (see section #5), social listening, People Also Ask and Related Searches on Google, competitive analysis on Reddit and G2, and yes – my old friends Claude and Gauge. But never just AI.

AI is a great brainstorming partner and a terrible primary source. Treat it like a research assistant, not an oracle: it can surface candidates, but every prompt worth tracking has to be grounded in something real.

Should I use AI to scale AEO content?

While I can't answer the question on your behalf, I can tell you the 3 questions I'd ask myself first:

- Where is your quality bar? Are you known for your content? Do you want to be? If your brand has any reputation for thoughtful writing (or you'd like one) AI slop will erode that faster than it builds anything.

- How risk-averse are you? Models will get better at detecting and devaluing AI slop as citation candidates. Are you ready to shoulder the consequences if "AI-generated" becomes a citation-killer six months from now?

- What kind of content are you trying to automate? AI handles formulaic stuff (comparison tables, glossary entries, integration docs) well enough. Thought leadership, original research, anything with a real point of view, not so much. Pretending otherwise is naive at best, reputationally suicidal at worst.

Content is the substrate AEO works on – if you don't have something for the model to read, cite, and recommend, none of the rest of this matters. It's only natural to feel tempted to fill that surface area as fast as possible, with the cheapest tool available, but that doesn't mean it's a good idea.

I'm not saying this from a moral high horse – we tried it too.

We tested one of the most popular and fancy ($$$) end-to-end AI content automation tools out there with the hopes we would be able to offload some of the content creation burden; you'd think throwing money at the problem would solve it, but after weeks of iteration, nothing it produced met our standards (or came close enough to make it worth the reputational risk and level of investment). So we're sticking to humans for now.

My current stance, which is roughly the sensible-person consensus:

It would be silly not to use AI tools to speed up the work. Research, briefs, outlines, drafting summaries, grammar edits, fact-checking against sources are all fair game to me and something we'll continue to invest in and expand on.

It would be sillier to think you can offload the whole thing. Strategy, voice, taste, judgment about what to write and how to write it, copyediting processes, those should still be yours.

That's our take. Yours might be different.

Only one way to find out.

Some parting words

I didn't write this to claim I've figured out AEO. Far from it.

I wrote it because the version of me from a year ago would have loved to read it, and because the version of me a year from now will probably disagree with at least two points on this list.

I might just be throwing pasta at the wall and seeing what sticks.

…I might also be a really good pasta thrower.

Only time will tell how much of this advice holds up. Ask me again in a couple of years when the wave has either broken or kept going, and I'll have a better answer.

In the meantime, if you're a peer figuring this out alongside me: let's trade notes. My DMs are always open!

And if you want to watch me fake it till I make it in real time, PostHog is hiring.

[1] "Clickstream" is the catch-all term for behavioral data scraped (often without users realizing) from browser extensions, ISPs, or app SDKs. Vendors who sell AEO tools love it because it's the only data source that even pretends to estimate prompt volume. The problem: the panels can be limited (relative to the user populations they're trying to model), geographically skewed (mostly US, mostly Chrome users), and demographically self-selected. Extrapolating "global ChatGPT prompt volume" from a sample of a few million people who installed an extension that promised free coupons is, in my opinion, shaky at best, so tread lightly when using it as a source of truth.
